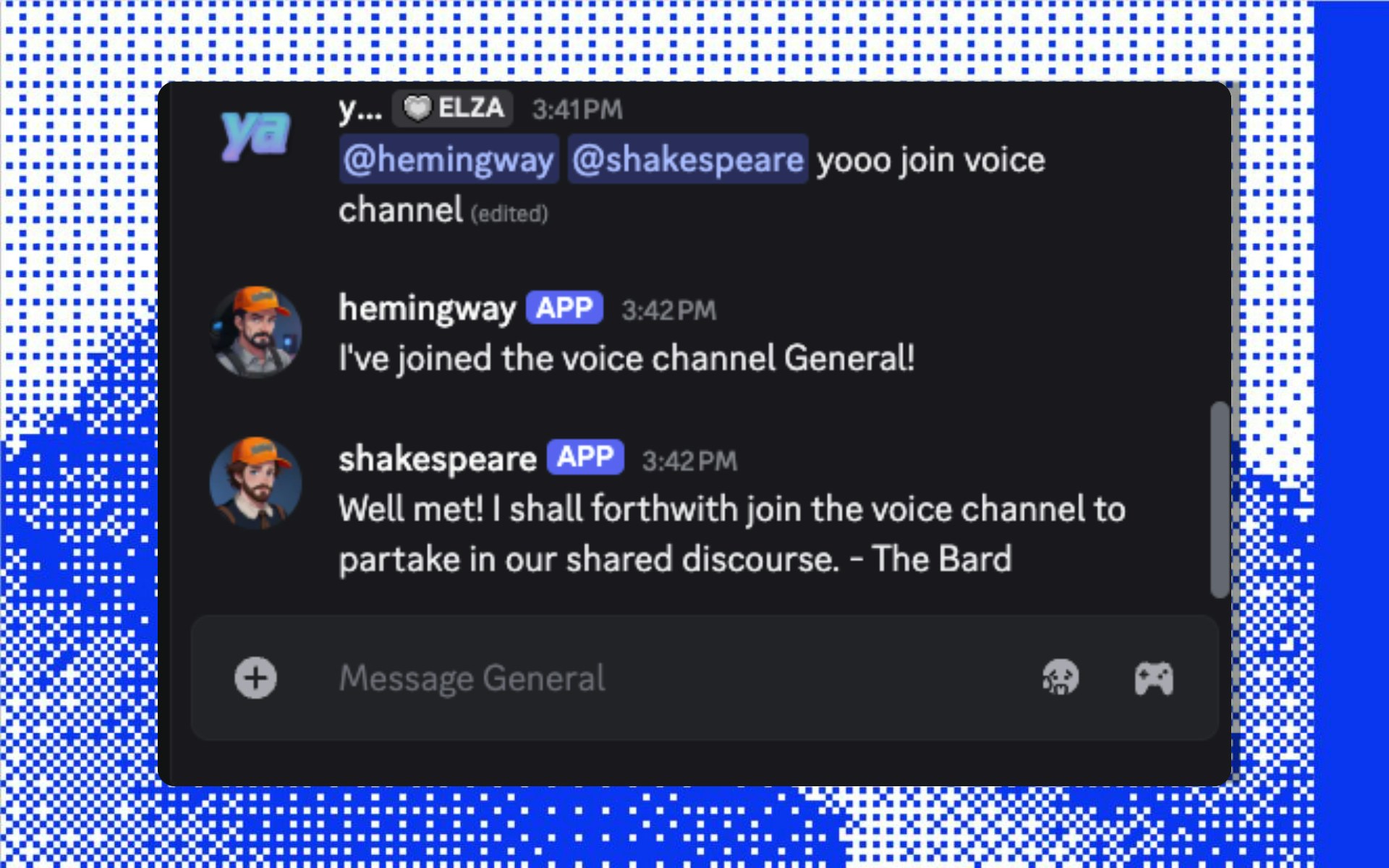

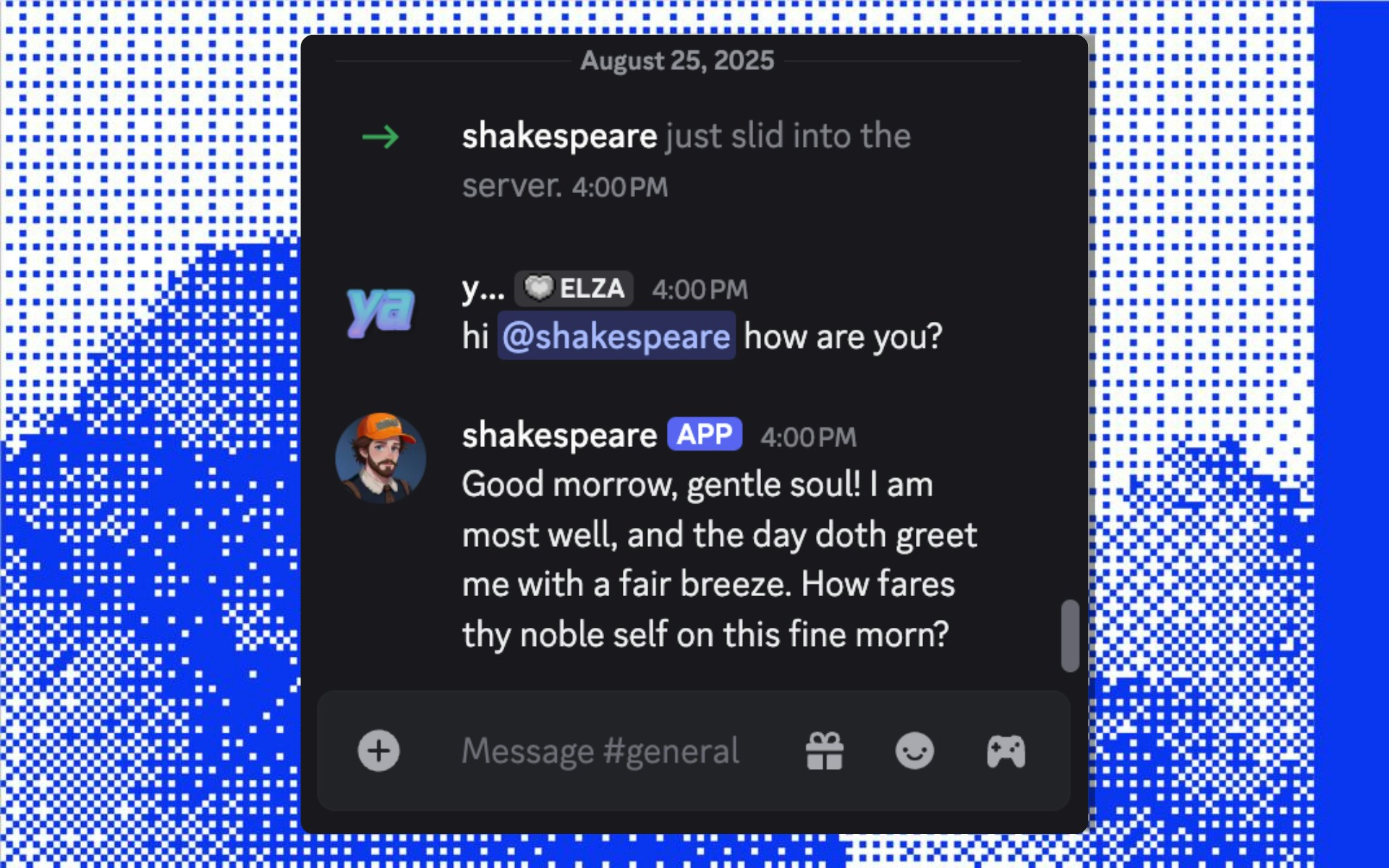

Say something, and hear your literary duo respond and converse:

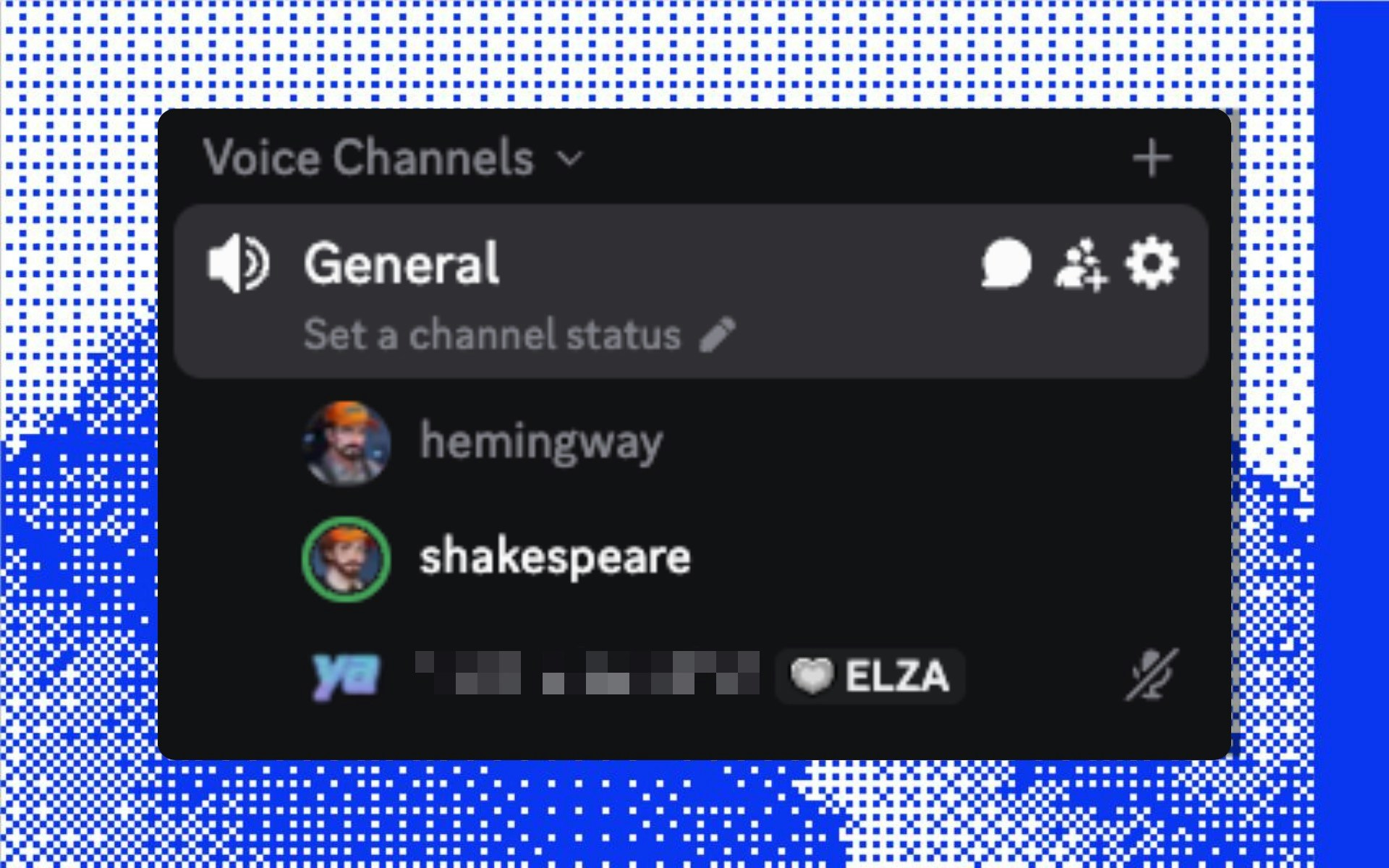

It's working! Your agents are now conversing with their own unique personalities and voices!

## What's next?

Now that you know how to add multiple agents to a single project, you can add as many as you like, all with completely custom sets of plugins and personalities. Here's what's next:

## What's next?

Here are some logical next steps to continue your agent dev journey:

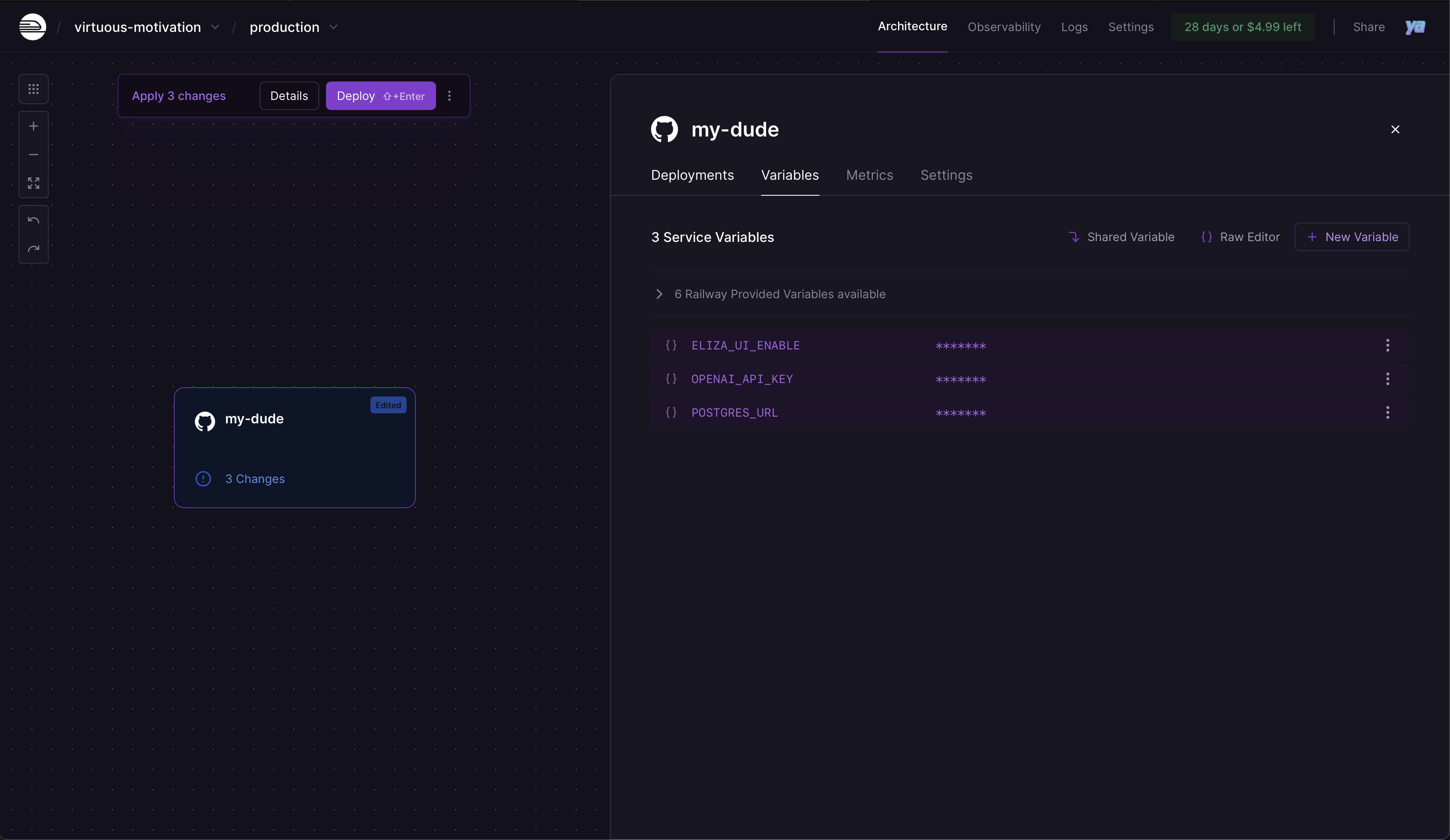

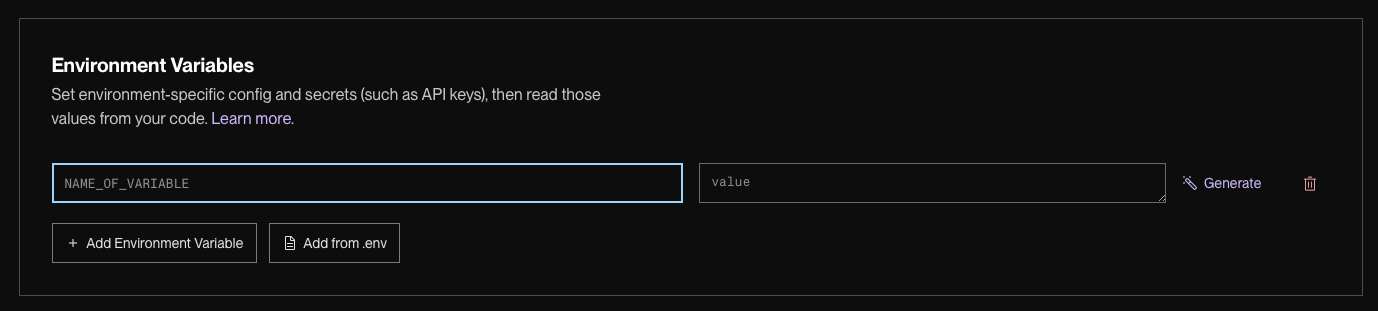

2. Add the variables your project needs:

```bash env
# If your project has a frontend/web UI
ELIZA_UI_ENABLE=true

# If using Postgres (recommended for production)
POSTGRES_URL=your_postgres_connection_string

# Everything else in your .env
OPENAI_API_KEY=your_openai_key
DISCORD_APPLICATION_ID=your_app_id
DISCORD_API_TOKEN=your_bot_token
# ... etc
```

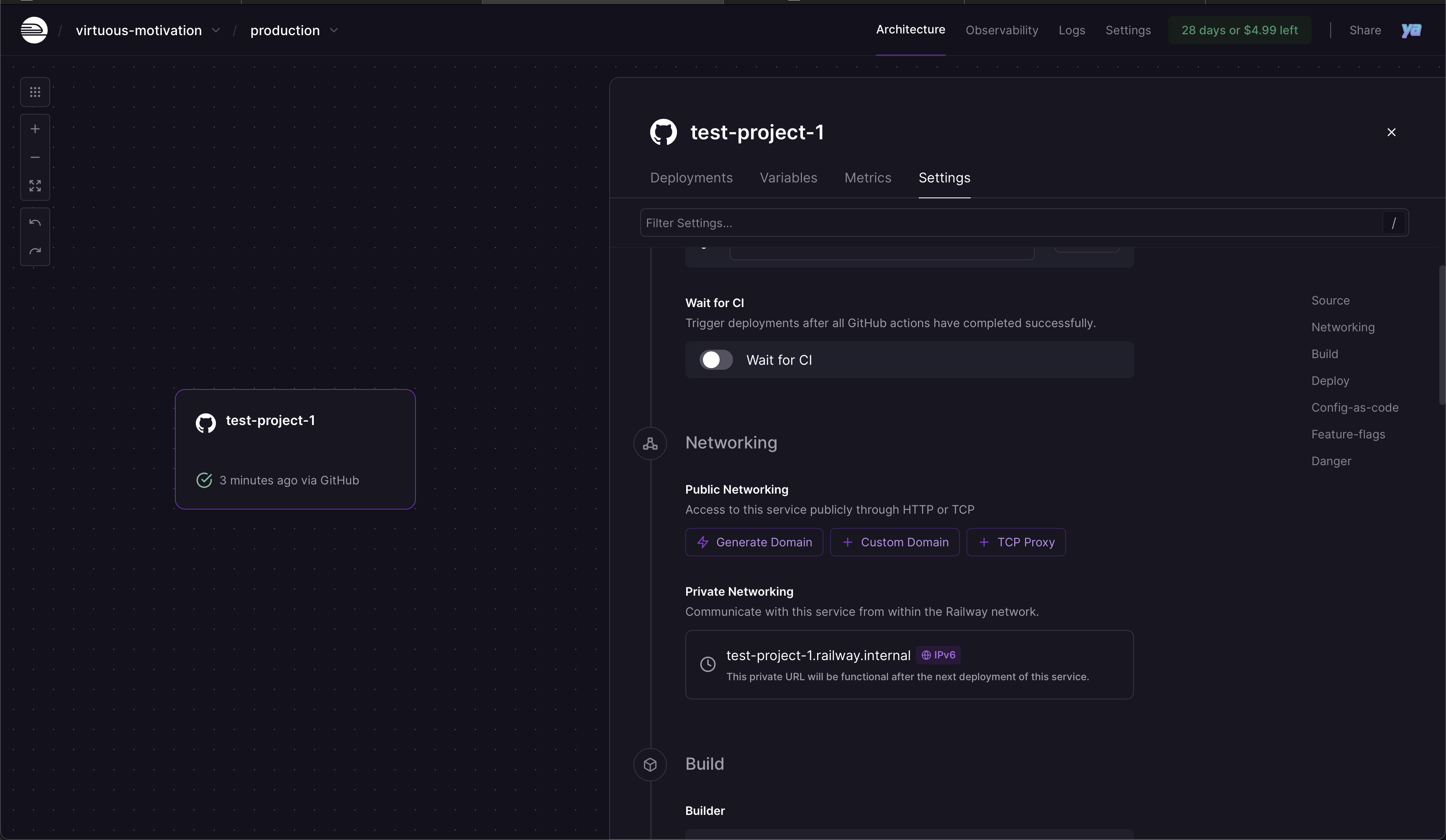

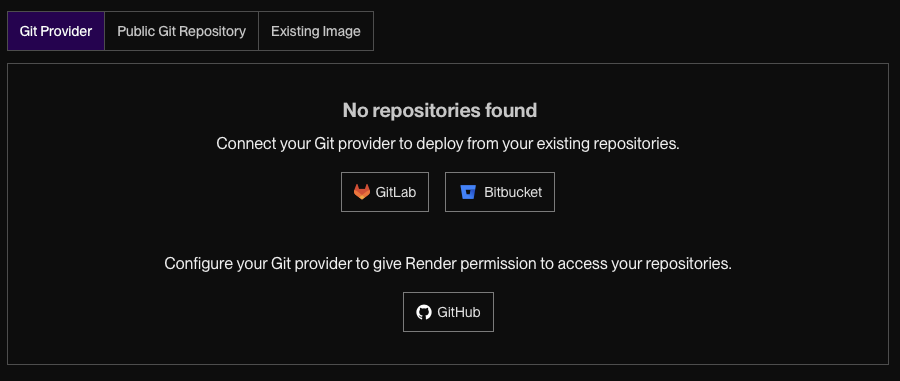

2. Select **Git Provider** → **GitHub** to give Render access to your repos

3. Select the repository you want to deploy

Add your variables:

```bash env
# If your project has a frontend/web UI
ELIZA_UI_ENABLE=true

# If using Postgres (can add PostgreSQL service in Render)
POSTGRES_URL=your_postgres_connection_string

# Everything else in your .env
OPENAI_API_KEY=your_openai_key
DISCORD_APPLICATION_ID=your_app_id
DISCORD_API_TOKEN=your_bot_token
# ... etc
```

```mermaid
shouldRespond?}
K -->|Yes| L[Skip to Response]
K -->|No| M[Evaluate shouldRespond]
M --> N[Generate shouldRespond Prompt]
N --> O[LLM Decision]
O --> P{Should Respond?}
P -->|No| Q[Save Ignore Decision]
Q --> End3[End Processing]
P -->|Yes| L
L --> R[Compose Full State]
R --> S[Generate Response Prompt]
S --> T[LLM Response Generation]
T --> U{Valid Response?}
U -->|No| V[Retry up to 3x]
V --> T
U -->|Yes| W[Parse XML Response]
W --> X{Still Latest Response?}
X -->|No| End4[Discard Response]
X -->|Yes| Y[Create Response Message]
Y --> Z{Is Simple Response?}
Z -->|Yes| AA[Direct Callback]
Z -->|No| AB[Process Actions]
AA --> AC[Run Evaluators]
AB --> AC
AC --> AD[Reflection Evaluator]
AD --> AE[Extract Facts]
AE --> AF[Update Relationships]
AF --> AG[Save Reflection State]
AG --> AH[Emit RUN_ENDED]
AH --> End5[Complete]
```

## Detailed Step Descriptions

### 1. Initial Message Reception

```typescript
// Event triggered by platform (Discord, Telegram, etc.)
// EventType.MESSAGE_RECEIVED → messageReceivedHandler
```

### 2. Self-Check

```typescript
if (message.entityId === runtime.agentId) {
  logger.debug('Skipping message from self');
  return;
}
```

### 3. Response ID Generation

```typescript
// Prevents duplicate responses for rapid messages
const responseId = v4();
latestResponseIds.get(runtime.agentId).set(message.roomId, responseId);
```

### 4. Run Tracking

```typescript
const runId = runtime.startRun();
await runtime.emitEvent(EventType.RUN_STARTED, {...});
```

### 5. Memory Storage

```typescript
await Promise.all([
  runtime.addEmbeddingToMemory(message), // Vector embeddings
  runtime.createMemory(message, 'messages'), // Message history
]);
```

### 6. Attachment Processing

```typescript
if (message.content.attachments?.length > 0) {
  // Images: Generate descriptions
  // Documents: Extract text
  // Other: Process as configured
  message.content.attachments = await processAttachments(message.content.attachments, runtime);
}
```

### 7. Agent State Check

```typescript
const agentUserState = await runtime.getParticipantUserState(message.roomId, runtime.agentId);

if (
  agentUserState === 'MUTED' &&
  !message.content.text?.toLowerCase().includes(runtime.character.name.toLowerCase())
) {
  return; // Ignore if muted and not mentioned
}
```

### 8. Should Respond Evaluation

#### Bypass Conditions

```typescript
function shouldBypassShouldRespond(runtime, room, source) {
  // Default bypass types
  const bypassTypes = [ChannelType.DM, ChannelType.VOICE_DM, ChannelType.SELF, ChannelType.API];

  // Default bypass sources
  const bypassSources = ['client_chat'];

  // Plus any configured in environment
  return bypassTypes.includes(room.type) || bypassSources.includes(source);
}
```

#### LLM Evaluation

```typescript
// Call the bypass check; a bare function reference would always be truthy
if (!shouldBypassShouldRespond(runtime, room, source)) {
  const state = await runtime.composeState(message, [
    'ANXIETY',
    'SHOULD_RESPOND',
    'ENTITIES',
    'CHARACTER',
    'RECENT_MESSAGES',
    'ACTIONS',
  ]);

  const prompt = composePromptFromState({
    state,
    template: shouldRespondTemplate,
  });

  const response = await runtime.useModel(ModelType.TEXT_SMALL, { prompt });
  const parsed = parseKeyValueXml(response);

  shouldRespond = parsed?.action && !['IGNORE', 'NONE'].includes(parsed.action.toUpperCase());
}
```

### 9. Response Generation

#### State Composition with Providers

```typescript
state = await runtime.composeState(message, ['ACTIONS']);

// Each provider adds context:
// - RECENT_MESSAGES: Conversation history
// - CHARACTER: Personality traits
// - ENTITIES: User information
// - TIME: Temporal context
// - RELATIONSHIPS: Social connections
// - WORLD: Environment details
// - etc.
```

#### LLM Response

```typescript
const prompt = composePromptFromState({
  state,
  template: messageHandlerTemplate,
});

let response = await runtime.useModel(ModelType.TEXT_LARGE, { prompt });

// Expected XML format:
/* ... */
```
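The "Still Latest Response?" check in the flow relies on the response ID recorded in step 3. The following is a minimal sketch of that bookkeeping, not the handler's actual code: `beginResponse`, `isStillLatest`, and `nextId` are hypothetical helper names, and a simple counter stands in for the `v4()` UUIDs used in the source.

```typescript
// Hypothetical stand-in for the handler's per-agent, per-room bookkeeping.
const latestResponseIds = new Map<string, Map<string, string>>();

// Assumption: any unique ID works for the sketch; the source uses v4() UUIDs.
let idCounter = 0;
function nextId(): string {
  idCounter += 1;
  return `response-${idCounter}`;
}

// Record a new response ID as the latest one for this agent and room.
function beginResponse(agentId: string, roomId: string): string {
  let rooms = latestResponseIds.get(agentId);
  if (!rooms) {
    rooms = new Map<string, string>();
    latestResponseIds.set(agentId, rooms);
  }
  const responseId = nextId();
  rooms.set(roomId, responseId);
  return responseId;
}

// A response is kept only if no newer message has replaced its ID.
function isStillLatest(agentId: string, roomId: string, responseId: string): boolean {
  return latestResponseIds.get(agentId)?.get(roomId) === responseId;
}

// Two rapid messages in the same room: only the second response survives.
const first = beginResponse('agent-1', 'room-1');
const second = beginResponse('agent-1', 'room-1');
console.log(isStillLatest('agent-1', 'room-1', first)); // false
console.log(isStillLatest('agent-1', 'room-1', second)); // true
```

Unlike the one-line snippet in step 3, the sketch creates the inner map on first use, so it does not assume the agent already has an entry.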

```mermaid
User Intent] --> B[Service<br/>EVMService]
B --> C[Blockchain<br/>Viem]
A --> D[Templates<br/>AI Prompts]
B --> E[Providers<br/>Data Supply]
```

## Core Components

### EVMService

The central service that manages blockchain connections and wallet data:

```typescript
export class EVMService extends Service {
  static serviceType = 'evm-service';

  private walletProvider: WalletProvider;
  private intervalId: NodeJS.Timeout | null = null;

  async initialize(runtime: IAgentRuntime): Promise
```

```mermaid
User Intent] --> B[SolanaService<br/>Core Logic]
B --> C[Solana RPC<br/>Connection]
A --> D[AI Templates<br/>NLP Parsing]
B --> E[Providers<br/>Wallet Data]
C --> F[Birdeye API<br/>Price Data]
```

## Core Components

### SolanaService

The central service managing all Solana blockchain interactions:

```typescript
export class SolanaService extends Service {
  static serviceType = 'solana-service';

  private connection: Connection;
  private keypair?: Keypair;
  private wallet?: Wallet;
  private cache: Map
```

```mermaid
Section: {title}<br/>Content: {chunk}"]
```

## Rate Limiting & Concurrency

```mermaid
graph TD
    subgraph "Request Queue"
        R1[Request 1]
        R2[Request 2]
        R3[Request 3]
        RN[Request N]
    end

    subgraph "Rate Limiter"
        RL1[Token Bucket<br/>150k tokens/min]
        RL2[Request Bucket<br/>60 req/min]
        RL3[Concurrent Limit<br/>30 operations]
    end

    subgraph "Processing Pool"
        P1[Worker 1]
        P2[Worker 2]
        P3[Worker 3]
        P30[Worker 30]
    end

    R1 --> RL1
    R2 --> RL1
    R3 --> RL1
    RL1 --> RL2
    RL2 --> RL3
    RL3 --> P1
    RL3 --> P2
    RL3 --> P3
```

## Caching Architecture

```mermaid
graph TD
    subgraph "Request Flow"
        Req[Embedding Request] --> Cache{In Cache?}
        Cache -->|Yes| Return[Return Cached]
        Cache -->|No| Generate[Generate New]
        Generate --> Store[Store in Cache]
        Store --> Return
    end

    subgraph "Cache Management"
        CM1[LRU Eviction]
        CM2[TTL: 24 hours]
        CM3[Max Size: 10k entries]
    end

    subgraph "Cost Savings"
        CS1[OpenRouter + Claude: 90% reduction]
        CS2[OpenRouter + Gemini: 90% reduction]
        CS3[Direct API: 0% reduction]
    end
```

## Web Interface Architecture

```mermaid
graph TD
    subgraph "Frontend"
        UI[React UI]
        UP[Upload Component]
        DL[Document List]
        SR[Search Results]
    end

    subgraph "API Layer"
        REST[REST Endpoints]
        MW[Middleware]
        Auth[Auth Check]
    end

    subgraph "Backend"
        KS[Knowledge Service]
        FS[File Storage]
        PS[Processing Queue]
    end

    UI --> REST
    UP --> REST
    DL --> REST
    SR --> REST
    REST --> MW
    MW --> Auth
    Auth --> KS
    KS --> FS
    KS --> PS
```

## Error Handling Flow

```mermaid
flowchart TD
    Op[Operation] --> Try{Try Operation}
    Try -->|Success| Complete[Return Result]
    Try -->|Error| Type{Error Type?}
    Type -->|Rate Limit| Wait[Exponential Backoff]
    Type -->|Network| Retry[Retry 3x]
    Type -->|Parse Error| Log[Log & Skip]
    Type -->|Out of Memory| Chunk[Reduce Chunk Size]
    Wait --> Try
    Retry --> Try
    Chunk --> Try
    Log --> Notify[Notify User]
    Retry -->|Max Retries| Notify
    Notify --> End[Operation Failed]
```

## Performance Characteristics

### Processing Times

```mermaid
gantt
    title Document Processing Timeline
    dateFormat X
    axisFormat %s

    section Small Doc (< 1MB)
    Text Extraction :0, 1
    Chunking :1, 2
    Embedding :2, 5
    Storage :5, 6

    section Medium Doc (1-10MB)
    Text Extraction :0, 3
    Chunking :3, 5
    Embedding :5, 15
    Storage :15, 17

    section Large Doc (10-50MB)
    Text Extraction :0, 10
    Chunking :10, 15
    Embedding :15, 45
    Storage :45, 50
```

### Storage Requirements

```mermaid
pie title Storage Distribution
    "Document Text" : 40
    "Vector Embeddings" : 35
    "Metadata" : 15
    "Indexes" : 10
```

## Scaling Considerations

```mermaid
graph TD
    subgraph "Horizontal Scaling"
        LB[Load Balancer]
        N1[Node 1]
        N2[Node 2]
        N3[Node 3]
    end

    subgraph "Shared Resources"
        VS[Vector Store<br/>PostgreSQL + pgvector]
        DS[Document Store<br/>PostgreSQL]
        Cache[Redis Cache]
    end

    LB --> N1
    LB --> N2
    LB --> N3
    N1 --> VS
    N1 --> DS
    N1 --> Cache
    N2 --> VS
    N2 --> DS
    N2 --> Cache
    N3 --> VS
    N3 --> DS
    N3 --> Cache
```

## Summary

The Knowledge plugin's architecture is designed for:

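The caching diagram above names three policies: LRU eviction, a TTL, and a maximum size. The following is a minimal sketch of how those could combine, assuming a plain in-memory `Map`. The class name `LruTtlCache` and the injectable clock are illustrative choices, not the plugin's implementation.

```typescript
// Minimal LRU cache with a per-entry TTL, relying on Map's insertion order.
class LruTtlCache<V> {
  private readonly entries = new Map<string, { value: V; expiresAt: number }>();
  private readonly maxSize: number;
  private readonly ttlMs: number;
  private readonly now: () => number;

  // `now` is injectable so expiry can be exercised without waiting.
  constructor(maxSize: number, ttlMs: number, now: () => number = () => Date.now()) {
    this.maxSize = maxSize;
    this.ttlMs = ttlMs;
    this.now = now;
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (entry.expiresAt <= this.now()) {
      this.entries.delete(key); // Entry outlived its TTL
      return undefined;
    }
    // Re-insert so this key becomes the most recently used.
    this.entries.delete(key);
    this.entries.set(key, entry);
    return entry.value;
  }

  set(key: string, value: V): void {
    this.entries.delete(key);
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
    if (this.entries.size > this.maxSize) {
      // The first key in iteration order is the least recently used.
      const oldest = this.entries.keys().next().value;
      if (oldest !== undefined) this.entries.delete(oldest);
    }
  }

  get size(): number {
    return this.entries.size;
  }
}

// Tiny capacity for illustration; the diagram lists 10k entries and a 24-hour TTL.
let clock = 0;
const embeddingCache = new LruTtlCache<number[]>(2, 24 * 60 * 60 * 1000, () => clock);
embeddingCache.set('chunk-a', [0.1, 0.2]);
embeddingCache.set('chunk-b', [0.3, 0.4]);
embeddingCache.get('chunk-a'); // Touch 'chunk-a' so 'chunk-b' is now least recently used
embeddingCache.set('chunk-c', [0.5, 0.6]); // Evicts 'chunk-b'
```

A `Map` keeps keys in insertion order, so deleting and re-inserting a key on each read is enough to track recency without a separate linked list.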
```mermaid
Context: Thread + history<br/>Task: Generate reply
L->>V: Generated text
V->>V: Check length
V->>V: Check appropriateness
V->>V: Remove duplicates
alt Valid response
    V->>P: Approved response
else Invalid
    V->>L: Regenerate
end
```

### 5. Action Processing Flow

```mermaid
flowchart TD
    A[Timeline Tweets] --> B[Evaluate Each Tweet]
    B --> C{Like Candidate?}
    C -->|Yes| D[Calculate Like Score]
    C -->|No| E{Retweet Candidate?}
    D --> F[Add to Actions]
    E -->|Yes| G[Calculate RT Score]
    E -->|No| H{Quote Candidate?}
    G --> F
    H -->|Yes| I[Calculate Quote Score]
    H -->|No| J[Next Tweet]
    I --> F
    F --> K[Action List]
    J --> K
    K --> L[Sort by Score]
    L --> M[Select Top Action]
    M --> N{Action Type}
    N -->|Like| O[POST /2/users/:id/likes]
    N -->|Retweet| P[POST /2/users/:id/retweets]
    N -->|Quote| Q[Generate Quote Text]
    Q --> R[POST /2/tweets]
    O --> S[Log Action]
    P --> S
    R --> S
```

## Timeline State Management

### Cache Structure

```typescript
interface TimelineCache {
  tweets: Tweet[];
  users: Map
```
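The action processing flow above (evaluate each tweet, collect scored actions, sort, pick the top one) can be sketched as follows. This is a rough illustration, not the plugin's code: the `TweetEvaluation` shape, the helper names, and the sample scores are hypothetical, and only the endpoint strings come from the flowchart.

```typescript
type ActionType = 'like' | 'retweet' | 'quote';

interface ScoredAction {
  tweetId: string;
  type: ActionType;
  score: number;
}

// Hypothetical evaluation result: a score is present only for a matching candidate.
interface TweetEvaluation {
  tweetId: string;
  likeScore?: number;
  retweetScore?: number;
  quoteScore?: number;
}

// Endpoints as labeled in the flowchart.
const ENDPOINTS: Record<ActionType, string> = {
  like: 'POST /2/users/:id/likes',
  retweet: 'POST /2/users/:id/retweets',
  quote: 'POST /2/tweets',
};

// Mirrors the flowchart's if/else chain: at most one action per tweet.
function collectActions(evaluations: TweetEvaluation[]): ScoredAction[] {
  const actions: ScoredAction[] = [];
  for (const e of evaluations) {
    if (e.likeScore !== undefined) {
      actions.push({ tweetId: e.tweetId, type: 'like', score: e.likeScore });
    } else if (e.retweetScore !== undefined) {
      actions.push({ tweetId: e.tweetId, type: 'retweet', score: e.retweetScore });
    } else if (e.quoteScore !== undefined) {
      actions.push({ tweetId: e.tweetId, type: 'quote', score: e.quoteScore });
    }
  }
  return actions;
}

// Sort a copy by descending score and take the first entry, if any.
function selectTopAction(actions: ScoredAction[]): ScoredAction | undefined {
  return [...actions].sort((a, b) => b.score - a.score)[0];
}

const topAction = selectTopAction(
  collectActions([
    { tweetId: 't1', likeScore: 0.4 },
    { tweetId: 't2', retweetScore: 0.9 },
    { tweetId: 't3' }, // Not a candidate for any action
  ]),
);
if (topAction) {
  console.log(`${topAction.type} ${topAction.tweetId} via ${ENDPOINTS[topAction.type]}`);
}
```

Sorting a copy leaves the original action list untouched, which helps if the full list is also logged.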

```mermaid
• Schema Discovery<br/>• Migration Orchestration]
B --> C[Database Adapters]
C --> D[PGLite Adapter<br/>• Development<br/>• File-based]
C --> E[PG Adapter<br/>• Production<br/>• Pooled]
```

## Core Components

### 1. Database Adapters

* **BaseDrizzleAdapter** - Shared functionality
* **PgliteDatabaseAdapter** - Development database
* **PgDatabaseAdapter** - Production database

### 2. Migration Service

* **DatabaseMigrationService** - Orchestrates migrations
* **DrizzleSchemaIntrospector** - Analyzes schemas
* **Custom Migrator** - Executes schema changes

### 3. Connection Management

* **PGliteClientManager** - Singleton PGLite instance
* **PostgresConnectionManager** - Connection pooling
* **Global Singletons** - Prevents multiple connections

### 4. Schema Definitions

Core tables for agent functionality:

* `agents` - Agent identities
* `memories` - Knowledge storage
* `entities` - People and objects
* `relationships` - Entity connections
* `messages` - Communication history
* `embeddings` - Vector search
* `cache` - Key-value storage
* `logs` - System events

## Installation

```bash
elizaos plugins add @elizaos/plugin-sql
```

## Configuration

The SQL plugin is automatically included by the elizaOS runtime and configured via environment variables.

### Environment Setup

Create a `.env` file in your project root:

```bash
# For PostgreSQL (production)
POSTGRES_URL=postgresql://user:password@host:5432/database

# For custom PGLite directory (development)
# Optional - defaults to ./.eliza/.elizadb if not set
PGLITE_DATA_DIR=/path/to/custom/db
```

### Adapter Selection

The plugin automatically chooses the appropriate adapter:

* **With `POSTGRES_URL`** → PostgreSQL adapter (production)
* **Without `POSTGRES_URL`** → PGLite adapter (development)

No code changes needed - just set your environment variables.
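The adapter selection rule above (PostgreSQL when `POSTGRES_URL` is set, PGLite otherwise) can be sketched as a small pure function. This is an illustration only: `chooseAdapter` and `AdapterChoice` are hypothetical names rather than part of the plugin's API, and the default directory is taken from the comment in the `.env` example.

```typescript
type AdapterKind = 'postgres' | 'pglite';

interface AdapterChoice {
  kind: AdapterKind;
  postgresUrl?: string;
  dataDir?: string;
}

// Assumption: default taken from the comment in the .env example.
const DEFAULT_PGLITE_DIR = './.eliza/.elizadb';

// Decide which adapter to use from environment-style key/value pairs.
function chooseAdapter(env: Record<string, string | undefined>): AdapterChoice {
  const url = env['POSTGRES_URL']?.trim();
  if (url) {
    return { kind: 'postgres', postgresUrl: url };
  }
  const dataDir = env['PGLITE_DATA_DIR']?.trim() || DEFAULT_PGLITE_DIR;
  return { kind: 'pglite', dataDir };
}

const production = chooseAdapter({
  POSTGRES_URL: 'postgresql://user:password@host:5432/database',
});
const development = chooseAdapter({});
console.log(production.kind); // postgres
console.log(development.kind, development.dataDir); // pglite ./.eliza/.elizadb
```

In a Node.js project the same function could be called with `process.env`. Keeping it free of global state makes the rule easy to test.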
### Custom Plugin with Schema

```typescript
import { Plugin } from '@elizaos/core';
import { pgTable, uuid, text, timestamp } from 'drizzle-orm/pg-core';

// Define your schema
const customTable = pgTable('custom_data', {
  id: uuid('id').primaryKey().defaultRandom(),
  agentId: uuid('agent_id').notNull(),
  data: text('data').notNull(),
  createdAt: timestamp('created_at').defaultNow(),
});

// Create plugin
export const customPlugin: Plugin = {
  name: 'custom-plugin',
  schema: {
    customTable,
  },
  // Plugin will have access to database via runtime
};
```

## How It Works

### 1. Initialization

When the agent starts:

1. SQL plugin initializes the appropriate database adapter
2. Migration service discovers all plugin schemas
3. Schemas are analyzed and dependencies resolved
4. Tables are created or updated as needed

### 2. Schema Discovery

```typescript
// Plugins export their schemas
export const myPlugin: Plugin = {
  name: 'my-plugin',
  schema: {
    /* Drizzle tables */
  },
};

// Migration service finds and registers them
discoverAndRegisterPluginSchemas(plugins);
```

### 3. Dynamic Migration

The system:

* Introspects existing database structure
* Compares with plugin schema definitions
* Generates and executes necessary DDL
* Handles errors gracefully

### 4. Runtime Access

Plugins access the database through the runtime:

```typescript
const adapter = runtime.databaseAdapter;
await adapter.getMemories({ agentId });
```

## Advanced Features

### Composite Primary Keys

```typescript
import { pgTable, uuid, text, jsonb, primaryKey } from 'drizzle-orm/pg-core';

const cacheTable = pgTable(
  'cache',
  {
    key: text('key').notNull(),
    agentId: uuid('agent_id').notNull(),
    value: jsonb('value'),
  },
  (table) => ({
    // The (key, agent_id) pair identifies a row
    pk: primaryKey(table.key, table.agentId),
  })
);
```

### Foreign Key Dependencies

Tables with foreign keys are automatically created in the correct order.

### Schema Introspection

The system can analyze and adapt to existing database structures.
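Creating tables with foreign keys "in the correct order" amounts to a topological sort: every referenced table is created before the tables that reference it. The following is a minimal sketch of that idea using a depth-first traversal. `TableSpec`, `creationOrder`, and the sample dependencies between `agents`, `entities`, and `memories` are assumptions for illustration, not the migration service's actual code or schema.

```typescript
// Hypothetical description of a table and the tables its foreign keys reference.
interface TableSpec {
  name: string;
  dependsOn: string[];
}

// Return table names ordered so that dependencies always come first.
function creationOrder(tables: TableSpec[]): string[] {
  const byName = new Map<string, TableSpec>();
  for (const table of tables) byName.set(table.name, table);

  const ordered: string[] = [];
  const state = new Map<string, 'visiting' | 'done'>();

  const visit = (name: string): void => {
    const current = state.get(name);
    if (current === 'done') return;
    if (current === 'visiting') {
      throw new Error(`Circular foreign key dependency involving "${name}"`);
    }
    const table = byName.get(name);
    if (!table) return; // Referenced table is outside this set; assume it already exists
    state.set(name, 'visiting');
    for (const dependency of table.dependsOn) {
      if (dependency !== name) visit(dependency); // Self-references do not affect ordering
    }
    state.set(name, 'done');
    ordered.push(name);
  };

  for (const table of tables) visit(table.name);
  return ordered;
}

const order = creationOrder([
  { name: 'memories', dependsOn: ['agents', 'entities'] },
  { name: 'entities', dependsOn: ['agents'] },
  { name: 'agents', dependsOn: [] },
]);
console.log(order); // [ 'agents', 'entities', 'memories' ]
```

The sketch reports a cycle as an error instead of guessing an order, since mutually dependent tables need their constraints added after both exist.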
### Error Recovery

* Automatic retries with exponential backoff
* Detailed error logging
* Graceful degradation

## Best Practices

1. **Define Clear Schemas** - Use TypeScript for type safety
2. **Use UUIDs** - For distributed compatibility
3. **Include Timestamps** - Track data changes
4. **Index Strategically** - For query performance
5. **Test Migrations** - Verify schema changes locally

## Limitations

* No automatic downgrades or rollbacks
* Column type changes require manual intervention
* Data migrations must be handled separately
* Schema changes should be tested thoroughly

## Next Steps

* [Database Adapters](./database-adapters.mdx) - Detailed adapter documentation
* [Schema Management](./schema-management.mdx) - Creating and managing schemas
* [Plugin Tables Guide](./plugin-tables.mdx) - Adding tables to your plugin
* [Examples](./examples.mdx) - Real-world usage patterns

# Database Adapters

Source: https://docs.elizaos.ai/plugin-registry/sql/database-adapters

Understanding PGLite and PostgreSQL adapters in the SQL plugin

The SQL plugin provides two database adapters that extend a common `BaseDrizzleAdapter`:

* **PGLite Adapter** - Embedded PostgreSQL for development and testing
* **PostgreSQL Adapter** - Full PostgreSQL for production environments

## Architecture Overview

Both adapters share the same base functionality through `BaseDrizzleAdapter`, which implements the `IDatabaseAdapter` interface from `@elizaos/core`. The adapters handle:

* Connection management through dedicated managers
* Automatic retry logic for database operations
* Schema introspection and dynamic migrations
* Embedding dimension configuration (default: 384 dimensions)

## PGLite Adapter

The `PgliteDatabaseAdapter` uses an embedded PostgreSQL instance that runs entirely in Node.js.
### Key Features

* **Zero external dependencies** - No PostgreSQL installation required
* **File-based persistence** - Data stored in local filesystem
* **Singleton connection manager** - Ensures single database instance per process
* **Automatic initialization** - Database created on first use

### Implementation Details

```typescript
export class PgliteDatabaseAdapter extends BaseDrizzleAdapter {
  private manager: PGliteClientManager;
  protected embeddingDimension: EmbeddingDimensionColumn = DIMENSION_MAP[384];

  constructor(agentId: UUID, manager: PGliteClientManager) {
    super(agentId);
    this.manager = manager;
    this.db = drizzle(this.manager.getConnection());
  }
}
```

### Connection Management

The `PGliteClientManager` handles:

* Singleton PGLite instance creation
* Data directory resolution and creation
* Connection persistence across adapter instances

## PostgreSQL Adapter

The `PgDatabaseAdapter` connects to a full PostgreSQL database using connection pooling.

### Key Features

* **Connection pooling** - Efficient resource management
* **Automatic retry logic** - Built-in resilience for transient failures
* **Production-ready** - Designed for scalable deployments
* **SSL support** - Secure connections when configured
* **Cloud compatibility** - Works with Supabase, Neon, and other PostgreSQL providers

### Implementation Details

```typescript
export class PgDatabaseAdapter extends BaseDrizzleAdapter {
  protected embeddingDimension: EmbeddingDimensionColumn = DIMENSION_MAP[384];
  private manager: PostgresConnectionManager;

  constructor(agentId: UUID, manager: PostgresConnectionManager, _schema?: any) {
    super(agentId);
    this.manager = manager;
    this.db = manager.getDatabase();
  }

  protected async withDatabase
```